Single-image night haze removal based on color channel transfer and estimation of spatial variation in atmospheric light

Shu-yun Liu, Qun Hao, Yu-tong Zhang, Feng Gao, Hai-ping Song, Yu-tong Jiang, Ying-sheng Wang, Xiao-ying Cui, Kun Gao *

a Key Laboratory of Photoelectronic Imaging Technology and System,Ministry of Education,School of Optics and Photonics,Beijing Institute of Technology,Beijing,100081, China

b China North Vehicle Research Institute, Beijing, China

Keywords: Dehazing image captured at night; Chromaticity fusion correction; Color channel transfer; Spatial change-based atmospheric light estimation; DehazeNet

ABSTRACT The visible-light imaging system used in military equipment is often subjected to severe weather conditions,such as fog,haze,and smoke,under complex lighting conditions at night that significantly degrade the acquired images.Currently available image defogging methods are mostly suitable for environments with natural light in the daytime,but the clarity of images captured under complex lighting conditions and spatial changes in the presence of fog at night is not satisfactory.This study proposes an algorithm to remove night fog from single images based on an analysis of the statistical characteristics of images in scenes involving night fog.Color channel transfer is designed to compensate for the high attenuation channel of foggy images acquired at night.The distribution of transmittance is estimated by the deep convolutional network DehazeNet,and the spatial variation of atmospheric light is estimated in a point-by-point manner according to the maximum reflection prior to recover the clear image.The results of experiments show that the proposed method can compensate for the high attenuation channel of foggy images at night,remove the effect of glow from a multi-color and non-uniform ambient source of light,and improve the adaptability and visual effect of the removal of night fog from images compared with the conventional method.

1.Introduction

Visible-light imaging systems on airborne,vehicle-mounted,and shipborne photoelectric equipment used in nocturnal military operations may encounter fog,haze,smoke,and other poor weather conditions under complex environmental lighting conditions that seriously degrade the images acquired.With the development of applications of computer vision,digital defogging technology now uses image processing methods to achieve clear images without changing the hardware of the original imaging system[1].Single-frame methods of digital defogging based on the atmospheric scattering model [2],such as the dark channel prior(DCP) [3] method proposed by He,have achieved good results on daytime scenes.However,the only source of natural light in the daytime is sunlight.On the contrary,the characteristics of lighting of nocturnal images vary with space,and artificial light sources of various colors may be present that provide poor illumination and lead to less information being extracted from the resulting images[7].Moreover,high attenuation channels are present,because of which algorithms to remove fog from images acquired at daytime are not suitable for those captured at night.

Current defogging methods for nocturnal images are often designed based on mathematical models.For example,Zhang et al.[8] proposed a method to defog nocturnal images based on an imaging model in which point-by-point change terms are used to replace constant atmospheric light and the characteristics of lighting of images acquired at night with spatial changes are considered.This method overcomes the limitation of the atmospheric scattering model but does not consider glow,because of which it cannot reflect the characteristics of glow of images acquired at night.Zhang et al.[9] used this model to propose a method of illumination compensation and color correction for foggy images acquired at night prior to fog removal to obtain an output image with balanced illumination and color correction.The method estimates ambient light in a point-by-point manner,and can deal with the characteristics of spatial variation in the lighting of images acquired at night.However,the output image usually contains halo artifacts and color distortion around the glow source.Li et al.[10] added the atmospheric point diffusion function to represent the characteristics of glow of images acquired at night and considered ambient lighting with spatial variations as well.Narasimhan et al.[11]expressed glow as the sum of the product of the shape and direction of illumination of the glow source multiplied by the corresponding glow region.

The above methods can deal with glow and spatially varying ambient lighting in images acquired at night,but the parameters of the objective function need to be evaluated based on several factors,such as the blur and depth of the scene,and the type of light source.It is difficult to obtain the values of all these parameters using only the information obtained from a single image,and some researchers have thus manually set them to constant values,which often results in a distorted output.

Liao et al.[12] proposed expressing the fog-free image as the difference between a foggy image and a fog density image without calculating the transmittance and atmospheric light to reduce the distortion caused by parameter estimation.However,the fog density map is prone to changes with the scene,and is unstable.Santra et al.[13]proposed a relaxed atmospheric light model to allow for the spatial variation of the ambient lighting.By eliminating the influence of atmospheric light on pixels and defogging point by point,this method can deal with the spatial variation of lighting and glow characteristics in nocturnal images,but it is not sufficiently accurate for images with complex structures.Pei et al.[14]used an improved DCP method for image defogging by using blurred images at night as source images and blurred images taken at daytime as reference images in Lαβ color space.The global color transfer method used in this method cannot accurately maintain the color characteristics of the image,usually introduces color distortion in the output image,and performs the color conversion between images with a global scope,without considering local changes in the characteristics of scenes of the source image.This leads to the loss of important edge details.

Ancuti et al.[15]proposed the concept of color channel transfer to remove fog from images acquired during the day.It uses a reference image derived from a source image to transmit information from the important color channel to the attenuated color channel to compensate for the loss of information.However,this method needs to be used in combination with other defogging methods,and its performance depends largely on the defogging ability of these methods.

To sum up, the physical models on which current algorithms for removing fog from nighttime images are based vary greatly in their effectiveness, which limits the adaptability of the algorithms. This paper proposes a robust algorithm for removing night fog from a single image. The main contributions of this study are as follows:

First,the proposed method compensates for the high attenuation channel of foggy images at night through color channel transfer,and translates the problem of defogging images acquired at night to a defogging network for images acquired during the day to estimate transmittivity.This solves the problem of a lack of datasets of foggy images acquired at night,and reduces the color distortion of the results of defogging.

Second, the deep convolutional network DehazeNet is used to estimate the distribution of transmittance, and a spatial variation-based atmospheric light model is established by combining this distribution with the maximum reflection prior and the relaxed atmospheric light theory. Atmospheric light is estimated in a pixel-by-pixel manner, and a clear image is recovered in which the effect of glow owing to non-uniform ambient lighting is suppressed, yielding an improved visual effect.

We use a model of images of foggy scenes and the statistical analysis of foggy images acquired at night.We also use a digital simulation-based contrast experiment,a contrast experiment based on an empirically acquired scene,and ablation experiments on a public dataset to verify the effectiveness of the proposed algorithm.

The remainder of this paper is organized as follows: Section 2 introduces related work in the area,including the model used to represent degraded images of foggy scenes and a statistical analysis of such images.In Section 3,we illustrate the proposed method to remove night haze from single images.Section 4 details the experiments on the proposed method,and the conclusions of this study are provided in Section 5.

2.Related work

2.1.Model of degraded images of foggy scenes

The atmosphere is composed of suspended particles of gas,aerosols,small water droplets,ice crystals,large raindrops,and hail particles.These particles scatter the incident sunlight,and the effect of scattering changes under different weather conditions,that is,different concentrations of suspended particles,that degrade image quality,as shown in Fig.1.

Fig.1.Model of foggy images.

The light intensity obtained by imaging equipment in foggy environments includes incident light attenuated by scattering and atmospheric light [2].

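Using $I(x)=E(d,\lambda)$, $J(x)=E_0(\lambda)$, $A_\infty=E_\infty(\lambda)$, and $t(x)=e^{-\beta(\lambda)d}$, we obtain the general model of foggy images:

$$I(x) = J(x)\,t(x) + A_\infty\,\bigl(1 - t(x)\bigr)$$

where $I(x)$ is the image received by the imaging equipment in fog, $J(x)$ is the fog-free image, $A_\infty$ is the atmospheric light at infinity, and $t(x)$ is the transmittance.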

The model illustrates the causes of image degradation in foggy environments. Light reflected by objects is attenuated by scattering in the medium during propagation, and atmospheric light is scattered by the medium into the path of propagation such that it participates in imaging. Given I(x), if t(x) and A∞ can be calculated, the fog-free image J(x) can be obtained from the model. This is an ill-posed problem, and certain prior knowledge is needed to obtain J(x).
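The forward model and its inversion can be illustrated with a short NumPy sketch. It assumes the standard form I(x) = J(x)t(x) + A∞(1 − t(x)) with images scaled to [0, 1]; the function names and the lower bound `t_min` on the transmittance are illustrative assumptions, not part of the original method.

```python
import numpy as np

def add_haze(J, t, A):
    """Synthesize a foggy image I = J*t + A*(1 - t)."""
    t = t[..., None]                      # broadcast transmittance over color channels
    return J * t + A * (1.0 - t)

def remove_haze(I, t, A, t_min=0.1):
    """Invert the model for J; t_min (an assumption) avoids division by values near 0."""
    t = np.maximum(t, t_min)[..., None]
    return (I - A * (1.0 - t)) / t

rng = np.random.default_rng(0)
J = rng.uniform(0.0, 1.0, size=(4, 4, 3))   # clear image
t = rng.uniform(0.3, 0.9, size=(4, 4))      # per-pixel transmittance
A = np.array([0.9, 0.8, 0.7])               # atmospheric light per channel
I = add_haze(J, t, A)
J_rec = remove_haze(I, t, A)
```

In practice t(x) and A∞ are unknown and must be estimated from I(x) alone, which is why prior knowledge is required.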

2.2.Statistical analysis of images of scenes involving night fog

2.2.1.Statistical analysis of illuminance and ambient lighting

The atmospheric light in foggy images in the daytime is evenly distributed,whereas lighting at night is mainly from an artificial source.This ambient lighting is uneven and may feature a variety of colors,as shown in Fig.2 [8].

Fig.2.Characteristics of ambient lighting in foggy images acquired at night.

It is clear that the overall brightness of the foggy image acquired at night is low,and features uneven ambient lighting of different colors-bluish,yellowish and reddish lights in brighter areas.In most models used to remove fog from images acquired at daytime,sunlight is the only source of natural light.It is thus often assumed that atmospheric light is evenly distributed.However,to establish a model to remove fog from images acquired at night,it is necessary to consider uneven brightness and ambient lighting with different colors.

To more intuitively explain how fog particles reduce the contrast of images in daytime, and the extent of decline in the contrast of foggy images with increasing haze concentration, we used the gray histogram of clear-foggy image pairs from the O-HAZE dataset [22] as an example. The contrast value was marked in the upper-right corner (the gray value of the image was normalized to the interval [0,1]). We also used the gray histogram of clear-foggy image pairs with different haze concentrations from the D-HAZY dataset [21] as an example.

Image contrast is defined as follows:

$$C = \sum_{\delta} \delta(i,j)^{2}\, P_{\delta}(i,j)$$

In the above, $\delta(i,j)=|i-j|$ is the gray difference between adjacent pixels, and $P_{\delta}(i,j)$ represents the probability that their gray difference is equal to $\delta(i,j)$. The larger the value of $C$, the higher the contrast and the more varied the levels from black to white. In this paper, the gray differences between each pixel and its four adjacent pixels were calculated.
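As an illustration, the contrast measure can be computed as below. The sketch assumes the common form C = Σ δ² P_δ, with differences taken between each pixel and its right and lower neighbours so that every 4-neighbour pair is counted once; the function name is hypothetical.

```python
import numpy as np

def contrast(gray):
    """C = sum over delta of delta^2 * P(delta), using 4-neighbour pixel pairs."""
    g = np.asarray(gray, dtype=np.float64)
    # Right and down differences cover each 4-neighbour pair exactly once.
    diffs = np.concatenate([
        np.abs(g[:, 1:] - g[:, :-1]).ravel(),
        np.abs(g[1:, :] - g[:-1, :]).ravel(),
    ])
    values, counts = np.unique(diffs, return_counts=True)
    prob = counts / counts.sum()            # empirical P_delta
    return float(np.sum(values ** 2 * prob))

flat = np.full((4, 4), 0.5)                     # no variation at all
checker = np.indices((4, 4)).sum(axis=0) % 2    # alternating 0/1 pattern
```

A flat image gives zero contrast, while the checkerboard, whose neighbours always differ by one, gives the largest value possible for gray levels in [0, 1].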

To study the characteristics of illuminance of foggy images at night and illustrate them with examples,we selected 20 foggy images taken during the day and 20 taken at night from Yahoo’s Flickr image dataset for illuminance-related statistics(values in the V channel of images in HSV(Hue,Saturation,Value)color space),as shown in Fig.3.

Fig.3.Illuminance-related statistics of foggy images acquired during the day and night from Flickr.

Fig.4.Distribution of the standard deviation of foggy images acquired during the day and night.

Owing to the low illumination at night,the distribution of illumination of foggy images acquired at night is also significantly lower than that of foggy images acquired during the day,and contains less image-related information.Therefore,the characteristics of low illumination of foggy images at night must be considered in image defogging.

2.2.2.Statistical analysis of loss of detail in foggy images acquired at night

Due to the presence of fog particles, the reflected light of the object is scattered and attenuated, and atmospheric light participates in the imaging process through the imaging equipment. The final image is affected by attenuated light and scattered atmospheric light, which leads to the loss of image detail. To quantitatively explain this loss in foggy images acquired in the daytime, we collected statistics on the distribution of the standard deviation of image blocks of over 1500 clear-foggy image pairs from the O-HAZE and D-HAZY datasets.

The standard deviation of the image block is defined as follows:

In the above, $\sigma$ represents the standard deviation of an image block, $n$ is the number of pixels in the block, $x_i$ is the gray value of pixel $i$, and $\bar{x}$ is the mean gray value of the block.
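One possible way to gather such block statistics is sketched below. It assumes non-overlapping square blocks and the population form of the standard deviation (division by n); the block size is an arbitrary choice for illustration, since it is not stated here, and the function name is hypothetical.

```python
import numpy as np

def block_std(gray, block=8):
    """Standard deviation of each non-overlapping block x block tile."""
    g = np.asarray(gray, dtype=np.float64)
    h, w = g.shape
    h, w = h - h % block, w - w % block       # crop to a multiple of the block size
    tiles = g[:h, :w].reshape(h // block, block, w // block, block)
    return tiles.std(axis=(1, 3))             # population std (divide by n)
```

A histogram of the returned values over many images gives the kind of distribution compared between daytime and nighttime foggy images.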

To study the loss of detail in foggy images acquired at night and illustrate it with examples, the standard deviations of image blocks were computed for 20 foggy images taken in the daytime and 20 taken at night, selected from Flickr, as shown in Fig.4.

The standard deviation of daytime foggy images is distributed in the middle range, whereas that of foggy images at night lies in a very low range. Compared with foggy images acquired during the day, foggy images acquired at night thus lose more detail and contain less image information. Therefore, fog removal for images acquired at night places higher demands on recovering the details of the input foggy images.

2.2.3.Statistical analysis of high attenuation channel in night foggy scenery

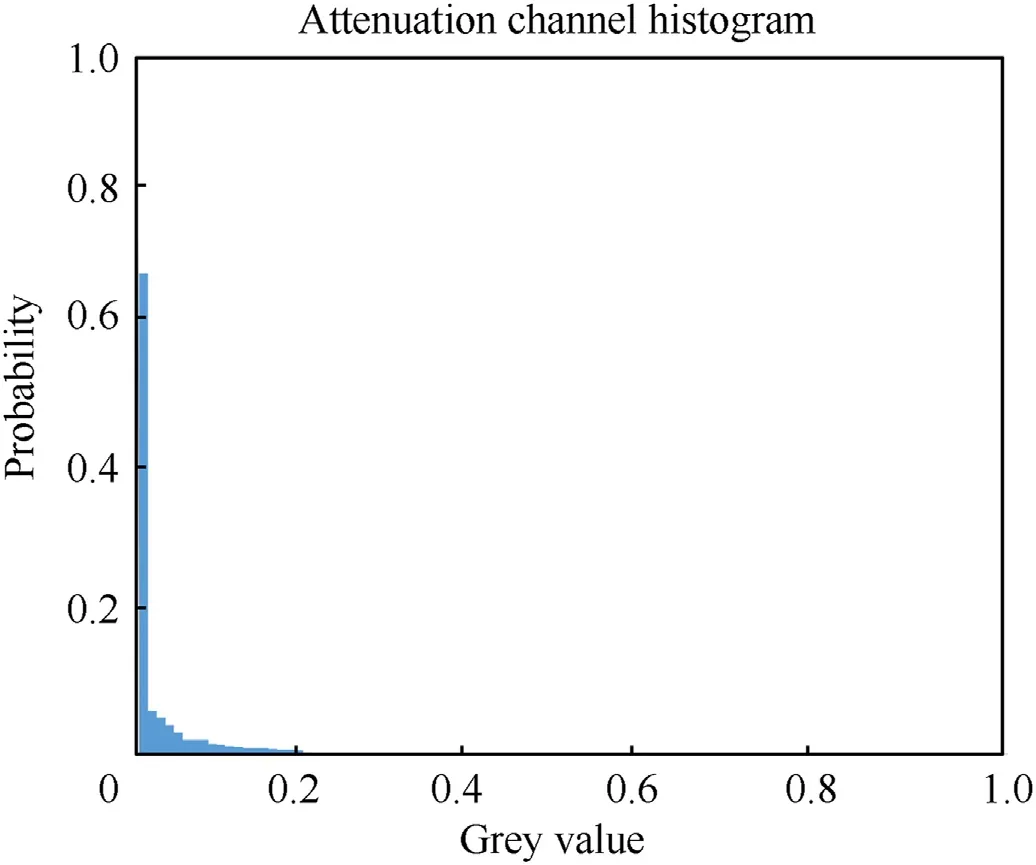

To study the attenuation in foggy images acquired at night in different channels,image pairs in the O-HAZE dataset were used to gather statistics on the distribution of the three-channel histogram of foggy and clear images in the daytime,as shown in Fig.5.More than 130 images from the dataset of images captured on foggy night,collected by Li [23],were used,as shown in Fig.6.

Fig.5.Analysis of attenuation of three channels in foggy images acquired during the day: (a),(b),(c) Three groups of clear-foggy image pairs and their corresponding three-channel histograms;Top: clear image;Bottom: foggy image;Left: image;Right: corresponding histogram.

Fig.6.Analysis of three-channel attenuation in foggy images acquired at night:(a),(b),(c),(d),(e) Five foggy image pairs acquired at night and their corresponding three-channel histograms;Left: foggy images acquired at night;Right: three-channel gray image and the distribution of its corresponding histogram.

The histogram of clear images in the daytime was distributed over a wide range while that of foggy images was narrow, and the situation was the same in all three channels. That is, in the same scene, the contrast of the image decreased with fog in the daytime, and the degree of degradation of the three channels was the same. However, foggy images at night often have a highly attenuated channel, such as the blue channel in Fig.6(a)-Fig.6(e), and the red channel in Fig.6(b). This is related to artificial lighting and haze scattering at night.

We gathered statistics on the highly attenuated channels of the dataset of foggy images acquired at night,and their histogram is shown in Fig.7.The gray value of the channel was distributed close to zero with a high probability.

Fig.7.Map of color channel distribution of foggy images at night (Li [23]).

To sum up,the characteristics of foggy images at night are as follows:

(1) They have poor illumination(as shown in Fig.3).

(2) These images at night lose a significant amount of detail (as shown in Fig.4).The standard deviation of the image block of foggy images in the daytime is distributed over a larger area,that is,they contain more details.

(3) Under uneven ambient lighting of different colors(as shown in Fig.2),although foggy images at night are dim on the whole,they contain bright areas that are biased to blue,yellow,and red.

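(4) The histogram of clear images acquired in the daytime is distributed over a wide range while that of foggy images has a narrower distribution, and the attenuation of the three channels is the same (see Fig.5). By contrast, foggy images at night often have a highly attenuated channel whose gray values are concentrated close to zero (see Fig.6 and Fig.7).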

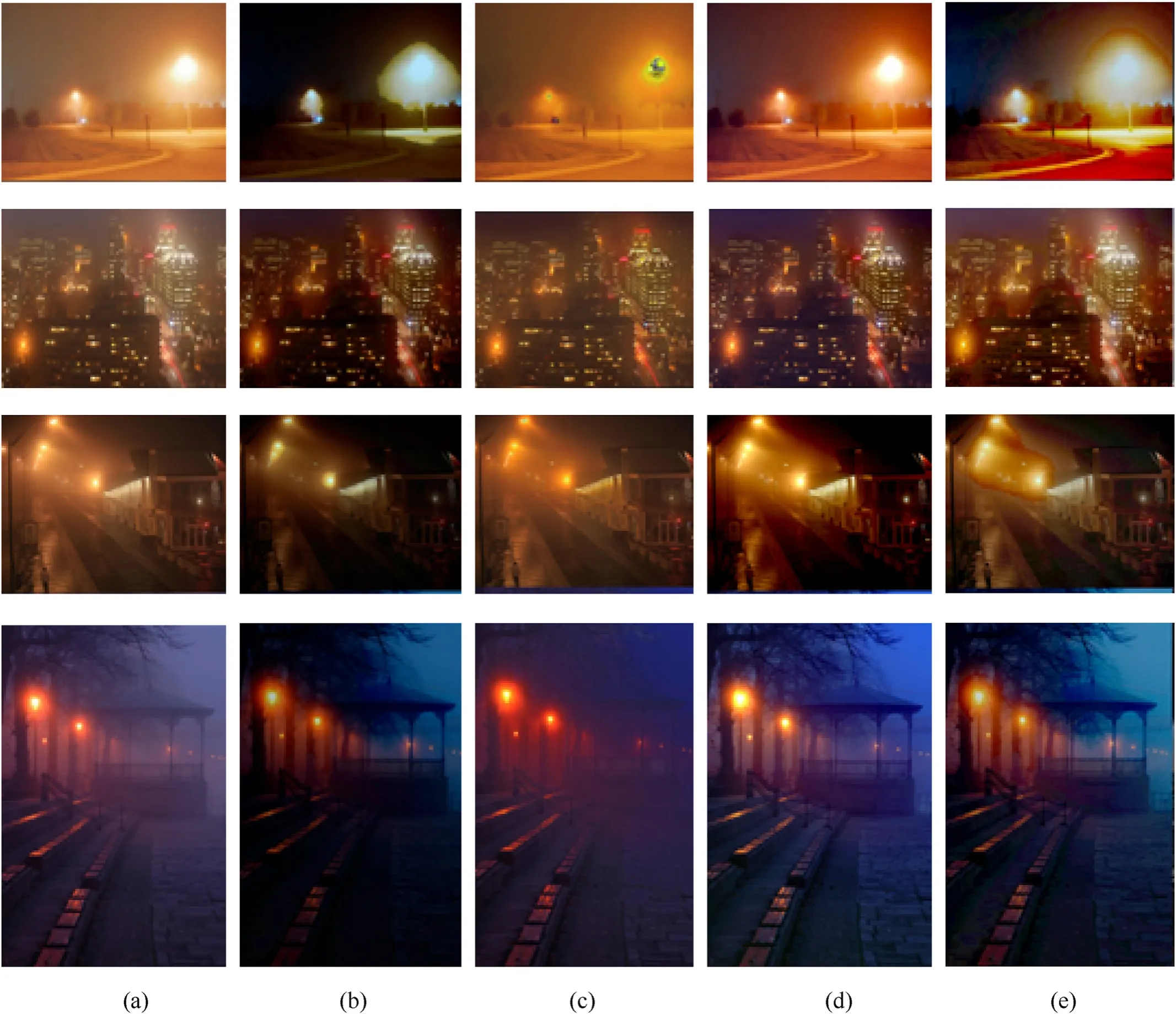

Fig.8 shows several classic ways to remove fog from images acquired during the day, such as DCP [5], boundary constraint- and context regularization-based defogging [3], non-local color prior-based dehazing (NLD) [4], and extreme reflectance channel prior-based defogging (ERC) [28]. For foggy images acquired at night, these defogging methods tend to produce serious color deviations. In particular, areas close to artificial lighting exhibit partial supersaturation, and the overall effect is not ideal. To defog images acquired at night, it is necessary to compensate for the highly attenuated color channel according to the characteristics of the images, handle the co-existing uneven and multi-colored ambient lighting, and ensure that the algorithm adapts well to different scenes.

Fig.8.Failure description of method to remove fog from images acquired during the day when applied to those captured at night:(a)Foggy image acquired at night;(b)Effect of fog removal of DCP;(c) Effect of fog removal based on boundary constraints and context regularization;(d) Effect of fog removal of NLD;(e) Effect of fog removal of ERC.

3.Methods

The architecture of our proposed DehazeNet-based night fog removal method is shown in Fig.9. The color channels of the input foggy image acquired at night, Is(x), are transferred to produce an image I(x) that approaches natural lighting conditions. I(x) is then fed into DehazeNet to calculate the transmittance distribution t(x). Meanwhile, I(x) is also used to calculate the atmospheric light model Aλi of each pixel using the maximum reflection prior, which accounts for ambient lighting of uneven brightness and different colors. The final clear and fog-free image is synthesized as follows:

Fig.9.Framework for the implementation of DehazeNet based on color channel transfer and estimated spatial variation in atmospheric light.

3.1.High attenuation channel compensation based on color channel transfer

According to the analysis in Section 2.2,foggy images acquired at night have lower illuminance,more serious loss of detail,greater spatial variation and more multi-color ambient lighting,and more highly attenuated channels than foggy images acquired during the day.

To solve the problem of high attenuation channels in foggy images at night, a method based on color channel transfer (CCT) is used for preprocessing.

Assuming that the image is quantized to 8 bits, color channel transfer takes place in the CIE L*a*b* color space, where L represents the brightness of the pixel, with a range of values of [0,100] representing colors from pure black to pure white. The value of a ranges from red to green, [127, −128], and that of b ranges from yellow to blue. Fig.10 shows a schematic diagram of the CIE L*a*b* color space. Its axes have little correlation among them. Thus, different operations can be applied to different color channels without incurring cross-channel artifacts.

Fig.10.Schematic diagram of CIE L* a * b * color space.

Color channel transfer is carried out in three steps.First,we subtract the average value of the original image.Second,the image is readjusted according to the ratio of the standard deviation of the original image to that of the reference image.Finally,the average value of the reference image is added to the result of above operation.Then color channel transitions can be expressed in the CIE L*a *b * color space as

The advantage of color channel transfer in the CIE L*a*b* color space is that the loss of color can be automatically compensated for without needing to estimate the direction of color loss. This is because in the CIE L*a*b* color space, information on red-green and blue-yellow chromaticity is mixed, while in the RGB space, information on these three colors is independent. Adjusting the mean values of a and b according to the appropriate reference image introduces a color shift along the two opponent-color axes. That is, the attenuation in the R or G channels can be compensated for by adjusting the red-green color shift, and the attenuation of the B channel can be compensated for by adjusting the blue-yellow color shift.
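The three-step transfer described above (subtract the source mean, rescale by the ratio of standard deviations, add the reference mean) can be sketched as follows. The sketch assumes both inputs are already in CIE L*a*b* as arrays of shape (H, W, 3), and that the scaling factor is the reference standard deviation divided by the source standard deviation, so that the output matches the reference statistics; the function name and the stabilizer `eps` are hypothetical.

```python
import numpy as np

def transfer_channel_stats(source, reference, eps=1e-6):
    """Per-channel mean/std transfer from `reference` to `source`."""
    src = np.asarray(source, dtype=np.float64)
    ref = np.asarray(reference, dtype=np.float64)
    mu_s, mu_r = src.mean(axis=(0, 1)), ref.mean(axis=(0, 1))
    sd_s, sd_r = src.std(axis=(0, 1)), ref.std(axis=(0, 1))
    # Step 1: remove source mean; step 2: rescale; step 3: add reference mean.
    return (src - mu_s) * (sd_r / (sd_s + eps)) + mu_r

rng = np.random.default_rng(1)
src = rng.uniform(0.0, 1.0, size=(6, 6, 3)) * 20.0 + 5.0   # stand-in for a Lab image
ref = rng.uniform(0.0, 1.0, size=(6, 6, 3)) * 50.0 - 10.0  # stand-in for a reference
out = transfer_channel_stats(src, ref)
```

After the transfer, each channel of the output has the mean and (up to `eps`) the standard deviation of the corresponding reference channel.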

To compensate for the high attenuation channels of foggy images at night and eliminate biased colors,an effective reference image needs to be established

where G(x) is a uniform grayscale image, white is fixed at (0,0,0) in the CIE L*a*b* color space, and moving the average value of color channels a and b to zero can yield tonal correction without changing the mean value of channel L, because this value affects the brightness of the image. D(x) is the detail layer of the initial image, S(x) is its significance coefficient, and I(x) is the initial image.

Iωhc is the version of the initial image blurred with a 5×5 Gaussian kernel, Iμ is the mean vector of the initial image, Iωhc(x) is the vector at position x of the Gaussian-blurred initial image, and ‖·‖ is the norm.
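The significance coefficient built from these quantities can be approximated as in the sketch below. It assumes S(x) = ‖Iωhc(x) − Iμ‖ with the Euclidean norm, uses a 5×5 binomial kernel as a stand-in for the Gaussian kernel, and expects the input already expressed in the working color space; the helper names are hypothetical.

```python
import numpy as np

def gaussian_blur_5x5(img):
    """Separable 5x5 blur with a binomial approximation of a Gaussian."""
    k = np.array([1.0, 4.0, 6.0, 4.0, 1.0]) / 16.0
    pad = np.pad(img, ((2, 2), (2, 2), (0, 0)), mode="edge")
    h, w = img.shape[:2]
    tmp = sum(k[i] * pad[:, i:i + w, :] for i in range(5))    # horizontal pass
    return sum(k[i] * tmp[i:i + h, :, :] for i in range(5))   # vertical pass

def saliency(img):
    """Distance between the blurred pixel vector and the image mean vector."""
    img = np.asarray(img, dtype=np.float64)
    mean_vec = img.mean(axis=(0, 1))
    return np.linalg.norm(gaussian_blur_5x5(img) - mean_vec, axis=2)

spot = np.zeros((9, 9, 3))
spot[4, 4] = 1.0                 # a single bright pixel on a dark background
s = saliency(spot)
```

Pixels whose blurred color differs most from the global mean, here the bright spot, receive the largest coefficient.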

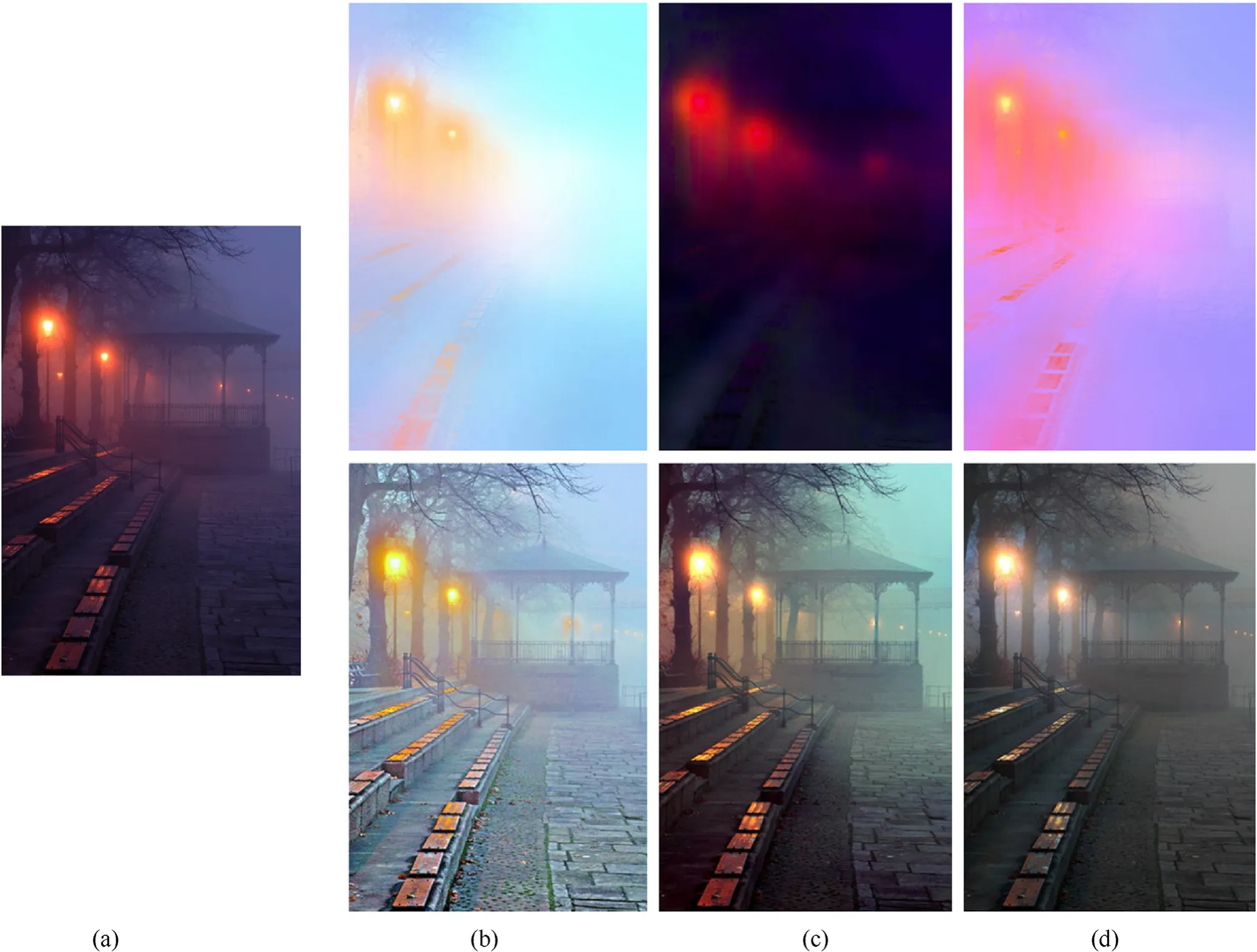

Each link and effect of color channel transfer are shown in Fig.11.The uniform gray imageG(x)can preliminarily correct the color of the nocturnal image,the detail layer contains details of the original image,and the significance coefficient considers the color change in significant areas.Then,the reference image is obtained by combining the above factors.Finally the initial image is shifted in the color channel according to the direction of the reference image in CIE L*a*b*color space.The result of color channel shift renders the luminance of the image closer to natural lighting conditions.

Fig.11.Color channel transfer: (a) Initial image;(b) Uniform grayscale image (G(x));(c) Detail layer (D(x));(d) Significance coefficient (S(x));(e) Reference image (R(x)),and (f)results of color channel transfer.

Fig.12 shows the image and histogram distribution before and after color channel transfer.It is clear that the high attenuation channel R is compensated for in the example.

Fig.12.Results of compensating for the high attenuation channel:(a)Foggy image acquired at night;(b)RGB channel and histogram distribution of(a);(c)Result of color channel transfer;(d) RGB channel and histogram distribution of (c).

3.2.Estimating spatial variation in atmospheric light

In the traditional model, the ambient illumination is assumed to be spatially consistent such that the atmospheric light A∞ in the daytime image is the same at each pixel of the three channels, and this is used to remove fog. However, nocturnal images usually have artificial light sources of multiple colors, such as street lamps, neon lights, and car lights, that are unevenly distributed. Therefore, when defogging images acquired at night, it is important to estimate atmospheric light in a way that considers the characteristics of ambient lighting at night. We combine the relaxed atmospheric light model [13] and the maximum reflection prior [27], and use the fast maximum reflection prior to estimate the atmospheric light of foggy images acquired at night.

According to the relaxed atmospheric light model, the model of foggy images at night can be defined as

$$I(x) = J(x)\,t(x) + A(x)\,\bigl(1 - t(x)\bigr)$$

Here, $A_\infty$ is replaced by $A(x)$, which reflects the spatial change in lighting in images acquired at night; that is, the atmospheric light changes with the position of the pixel.

To further reflect the lighting characteristics of different colors of images acquired at night,Ref.[27] rewrote the above as

We decompose the atmospheric light term into the product of intensity and color distribution

Zhang et al.[27] examined blocks of clear images acquired during the day,and found that each color channel had a very high intensity at some pixels.That is,the maximum intensity of each color channel had a high value.For an image,this can be expressed as

The incident intensity of light for clear images acquired during the day is uniformly distributed in space,and can be assumed to have a fixed value of 1.Thus,pixels with the highest local intensity on a particular color channel mainly correspond to objects or surfaces with high reflectivity on the corresponding color channel.Therefore,Eq.(14) is equivalent to

Areas of objects and surfaces with the highest reflectivity mainly include white (gray) or mirror areas, such as the sky, road, windows, and water, and surfaces of different colors, such as sources of light, flowers, billboards, and people. Thus, for most image blocks without fog acquired during the day, the maximum intensity of each color channel is approximately one. The above observations are called the maximum reflectivity prior. To demonstrate the validity of the prior, Zhang et al. [27] calculated the histogram of intensity of 50,000 maximum reflectance images. To intuitively explain the calculation of the maximum reflectance distribution, Fig.13 uses an image block as an example. The V channel is normalized in HSV space, and the maximum reflectance is then calculated in each RGB channel of the image block. A maximum reflectivity of one for each channel does not require a single white pixel. A significant number of pixels in each color channel shown have the maximum reflectivity. These pixels usually belong to objects that are white, gray, or have different colors, such as clothes, flowers, forests, and road surfaces.
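The local per-channel maximum that the prior refers to can be computed as in the following sketch. It assumes an RGB image scaled to [0, 1] and a square sliding window; the window size and function name are illustrative assumptions rather than values taken from Ref. [27].

```python
import numpy as np

def max_reflectance_map(img, patch=3):
    """Per-channel maximum over a patch x patch window around each pixel."""
    img = np.asarray(img, dtype=np.float64)
    r = patch // 2
    pad = np.pad(img, ((r, r), (r, r), (0, 0)), mode="edge")
    h, w = img.shape[:2]
    # Stack every shifted view of the window and take the element-wise maximum.
    shifted = [pad[dy:dy + h, dx:dx + w, :]
               for dy in range(patch) for dx in range(patch)]
    return np.max(shifted, axis=0)

img = np.zeros((5, 5, 3))
img[2, 2, 0] = 1.0                      # one highly reflective red pixel
m = max_reflectance_map(img, patch=3)
```

Under the prior, this map is close to one in every channel for clear daytime blocks, so departures from one in a foggy night image carry information about the color and intensity of the ambient light.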

Fig.13.Schematic diagram of maximum reflectivity of image block: (a) Initial image block;(b) Normalized image block of channel V;(c) Pixel of channel R with the maximum reflectivity;(d) Pixel of channel G with the maximum reflectivity;(e) Pixel of channel B with the maximum reflectivity.

3.3.Estimating spatial variation in transmittance of atmospheric light based on DehazeNet

Classical defogging methods require certain prior assumptions(such as the dark channel prior [5] and color prior [4]).Extracting these features is equivalent to a convolution of the image followed by non-linear mapping.Deep learning-based defogging that uses a convolutional neural network has attracted considerable attention in recent years.However,owing to a lack of datasets for this,not many deep learning methods are suitable for removing fog from images acquired at night.Considering that the foggy image acquired at night after color channel transfer is relatively similar to that acquired in the daytime,we can estimate the transmittance under night fog by improving the lightweight DehazeNet network.

DehazeNet is a trainable end-to-end system based on the convolutional neural network,and was proposed by Cai et al.[6].Its network structure is shown in Fig.14,and consists of a cascaded convolutional layer,a pooling layer,and a non-linear activation function.Each layer is designed according to the established assumptions/priors in image defogging.We obtain the transmittance of each point through feature extraction,multi-scale mapping,calculating the local extremum,and non-linear regression.

Fig.14.Structure of DehazeNet network.

Step 1.Feature extraction

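Inspired by the idea of taking the extreme value of the color channel in the classical color prior [4], the first layer of DehazeNet is composed of maxout units. The maxout function [25] is a feedforward non-linear activation function. A maxout unit takes the pixel-by-pixel maximum of k affine feature images to generate a new feature image. The output response is as follows:

$$F_1^{i}(x) = \max_{j \in [1,k]} f_1^{i,j}(x), \qquad f_1^{i,j} = W_1^{i,j} * I + B_1^{i,j}$$

where $W_1^{i,j}$ and $B_1^{i,j}$ are the filters and biases of the first layer, and $*$ denotes convolution.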
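The maxout operation itself can be illustrated with a minimal sketch. It assumes the affine feature maps have already been computed and are stacked as an array of shape (n, H, W), with consecutive groups of k maps feeding one output map; the function name is hypothetical.

```python
import numpy as np

def maxout(feature_maps, k):
    """Pixel-wise maximum over each consecutive group of k feature maps."""
    f = np.asarray(feature_maps, dtype=np.float64)
    n, h, w = f.shape
    if n % k != 0:
        raise ValueError("number of feature maps must be a multiple of k")
    return f.reshape(n // k, k, h, w).max(axis=1)

maps = np.arange(16, dtype=np.float64).reshape(4, 2, 2)   # four toy feature maps
out_maps = maxout(maps, k=2)                              # two output maps
```

Each output map keeps, at every pixel, the strongest response within its group, which reduces the number of maps by a factor of k while preserving resolution.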

We choose the multi-scale convolution operation in the second layer of DehazeNet. The sizes of the convolution kernels are 3×3, 5×5, and 7×7. The same number of convolution kernels is used for each of these three scales, and the output response is as follows:

Step 2.Local extremum access

According to the classical architecture of the CNN [26], local sensitivity can be overcome by considering the neighborhood maximum at each pixel. Considering that the local extremum satisfies the assumption that the transmittance is constant in a local area, this value for the third layer of DehazeNet is calculated as follows:

$$F_3^{i}(x) = \max_{y \in \Omega(x)} F_2^{i}(y)$$

In the above formula, $\Omega(x)$ is the neighborhood of size $f_3 \times f_3$ centered at $x$. The number of dimensions of the output of the third layer is $n_3 = n_2$, and the local extremum operation preserves the resolution of the feature image.

Step 3.Non-linear regression

For removing fog from images acquired at night, the output value of the last layer should lie in a small range with upper and lower bounds. This is a regression problem, and so we use the bilateral rectified linear unit (BReLU) activation in DehazeNet. The output of the fourth layer is defined as:

$$F_4 = \min\bigl(t_{\max},\ \max\bigl(t_{\min},\ W_4 * F_3 + B_4\bigr)\bigr)$$

In the above equation, $W_4=\{W_4\}$ contains a convolution kernel of size $n_3 \times f_4 \times f_4$, and $B_4=\{B_4\}$ contains a bias. $t_{\min}$ and $t_{\max}$ are the boundary values of the BReLU. Because the transmittance value is in the interval [0,1], we choose $t_{\min}=0$ and $t_{\max}=1$.
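The bounding behaviour of BReLU can be shown in a few lines. This sketch assumes the activation is applied element-wise to the pre-activation values of the last layer; the function name is illustrative.

```python
import numpy as np

def brelu(x, t_min=0.0, t_max=1.0):
    """Bilateral ReLU: clip values to the interval [t_min, t_max]."""
    return np.minimum(t_max, np.maximum(t_min, x))

pre_activation = np.array([-0.5, 0.3, 1.7])
t_hat = brelu(pre_activation)
```

Values inside the interval pass through unchanged, while values outside it are clamped to the nearest bound, so the predicted transmittance always stays in [0, 1].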

Step 4.Network training

It is difficult to obtain paired clear and foggy images at night. We use synthetic data based on the model of foggy images, and perform color channel transfer to compensate for the high attenuation channel in images acquired at night. The essential difference between foggy images acquired during the day and at night lies in the global uniformity of atmospheric light. DehazeNet learns a mapping from foggy images to transmittance, and the network itself does not estimate atmospheric light. It can thus be trained directly using synthetic foggy-clear image pairs acquired during the day. The mapping from foggy image to transmittance is learned by minimizing the loss between the transmittance output by the network and the true transmittance of the medium. We use the mean-squared error as the objective function and stochastic gradient descent to optimize the loss.
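The training objective can be illustrated on a toy problem. The sketch below is not DehazeNet: it replaces the network with a hypothetical one-parameter linear predictor of transmittance from a scalar haze feature, purely to show a mean-squared-error loss being reduced by mini-batch stochastic gradient descent. The data, learning rate, and batch size are all assumptions.

```python
import numpy as np

def mse(pred, target):
    """Mean-squared error between predicted and true transmittance."""
    return float(np.mean((pred - target) ** 2))

def sgd_step(w, b, x, t_true, lr=0.1):
    """One SGD update for the toy predictor t_hat = w * x + b."""
    err = (w * x + b) - t_true
    grad_w = 2.0 * np.mean(err * x)
    grad_b = 2.0 * np.mean(err)
    return w - lr * grad_w, b - lr * grad_b

rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=256)      # hypothetical scalar haze feature
t_true = 1.0 - 0.8 * x                   # hypothetical ground-truth transmittance
w, b = 0.0, 0.0
loss_before = mse(w * x + b, t_true)
for _ in range(200):
    idx = rng.integers(0, x.size, size=32)           # draw a mini-batch
    w, b = sgd_step(w, b, x[idx], t_true[idx])
loss_after = mse(w * x + b, t_true)
```

In the actual setting, the predictor is the convolutional network and the targets are the transmittance values used to synthesize the foggy training patches.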

4.Experimental results and analysis

4.1.Experiment setup

To demonstrate the effectiveness of the proposed method, we compared it with typical methods to remove fog from images acquired at night, including the method in Ref.[8], the method based on the polychromatic light model [10], the method based on the maximum reflection prior model [27], the method based on FFA-Net (feature fusion attention network for single image dehazing, Ref.[29]), and the method based on PSD (principled synthetic-to-real dehazing guided by physical priors, Ref.[30]). Objective and subjective performance evaluations were performed on the same test images. The selected dataset was due to Li [23], and contained more than 130 foggy images acquired at night that had been collected from the Internet. Some typical examples are shown in Fig.15. The experiment was divided into four parts: the experiment on eliminating the influence of ambient lighting, that on synthetic foggy images, that on foggy images acquired at night, and the ablation experiment on the color channel transfer and spatially varying atmospheric light modules. The peak signal-to-noise ratio (PSNR), structural similarity index (SSIM) [16], and color evaluation index (CIEDE2000) were used for the objective assessment of the results [24]. The Natural Image Quality Evaluator (NIQE) [17], Patch-based Contrast Quality Index (PCQI) [18], Blind/Referenceless Image Spatial QUality Evaluator (BRISQUE) [19], and Perception-based Image Quality Evaluator (PIQE) [20] were used to quantitatively evaluate the performance of each method in terms of removing fog from the images.
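Of the full-reference metrics listed, PSNR is the simplest to state, and a minimal sketch is given below. It assumes the standard definition PSNR = 10·log10(peak² / MSE) and images scaled to [0, 1]; the function name and the `peak` parameter are illustrative.

```python
import numpy as np

def psnr(reference, test, peak=1.0):
    """Peak signal-to-noise ratio in dB between a reference and a test image."""
    ref = np.asarray(reference, dtype=np.float64)
    tst = np.asarray(test, dtype=np.float64)
    mse = np.mean((ref - tst) ** 2)
    if mse == 0:
        return float("inf")               # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))

clean = np.zeros((4, 4))
noisy = np.full((4, 4), 0.1)              # uniform error of 0.1
score = psnr(clean, noisy)
```

A higher value indicates that the defogged result is closer to the reference image, which is why PSNR is only applicable to the synthetic experiments where a reference exists.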

Fig.15.Estimating the influence of eliminating ambient lighting on the foggy image:(a) Initial map of night fog;(b)Image defogging method based on a new imaging model;(c)Defogging method based on multi-color luminescence;(d)The proposed method(the upper part shows the ambient lighting estimated by the corresponding method and the lower part shows the result of eliminating ambient lighting).

4.2.Experiment on eliminating the influence of ambient lighting

As night scenes usually feature sources of artificial light of multiple colors, it is important to consider the characteristics of ambient lighting when removing fog from images acquired at night. To illustrate how the proposed color channel transfer, combined with the module to estimate the spatial variation in atmospheric light, eliminates the influence of ambient lighting, we first present the defogging results and compare them with the nighttime image defogging method based on the new imaging model [8] and the nighttime image defogging method based on the multi-color luminescence model [10]. The results are shown in Fig.15.

Fig.15 shows that the method proposed in Ref.[8] introduced color artifacts, whereas the defogging method in Ref.[10], based on the multi-color luminescence model, tended to lighten parts of the image, such as the region occupied by the sky. The results of the proposed method were more natural.

4.3.Experiment on removing fog from synthetic images acquired at night

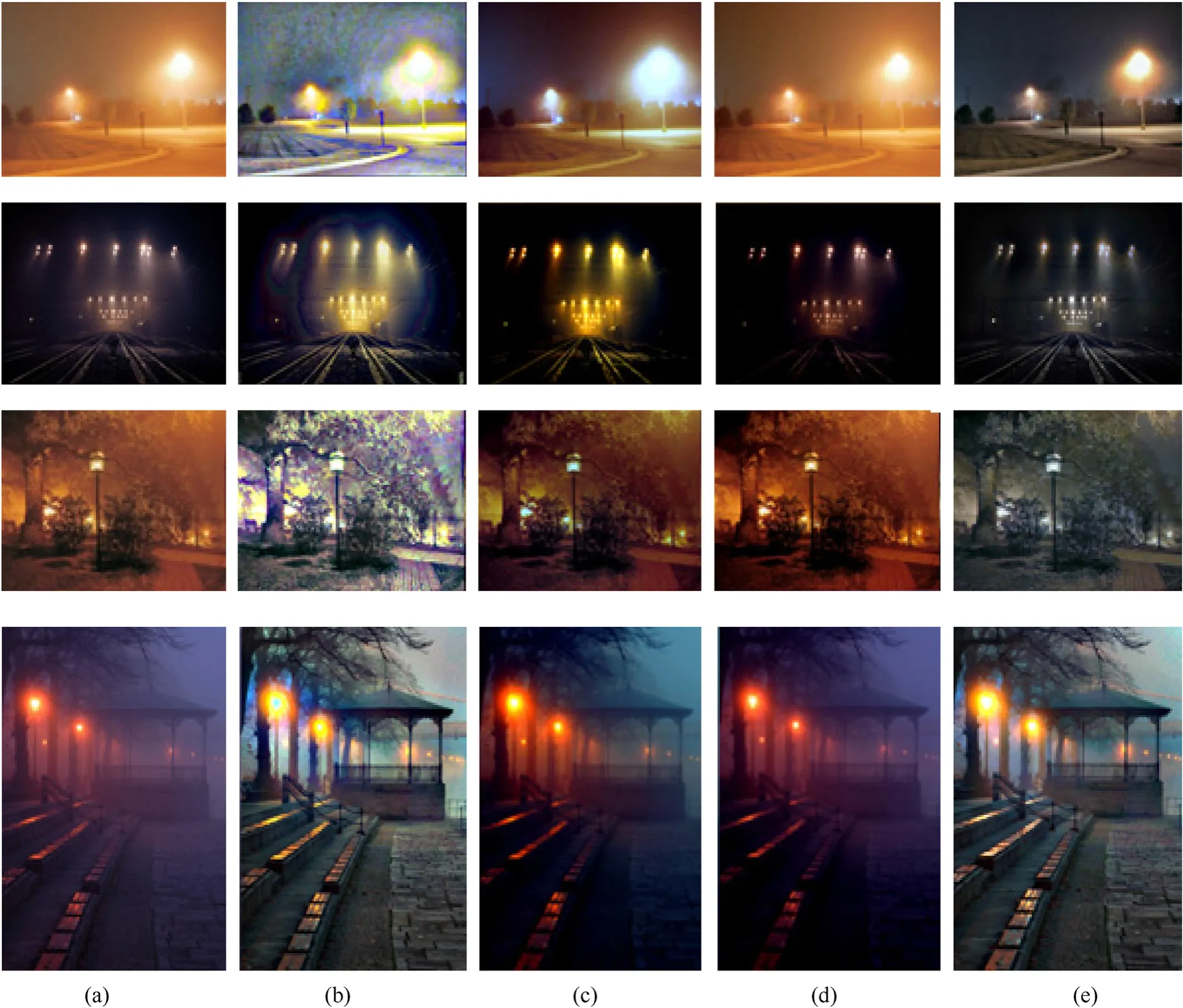

To quantitatively verify the effectiveness of the proposed method, we conducted experiments on synthesized nighttime foggy images generated according to the model in Section 2.1. The results of comparisons with the defogging methods proposed in Refs.[8,10,29,30] are shown in Fig.16 and Fig.17.

Fig.16.Comparison of methods on synthetic nighttime foggy images,Experiment 1: (a) Reference image;(b) Composite foggy image at night;(c) Defogging method proposed in Ref.[8];(d) Image defogging method based on the multi-color luminescence model in Ref.[10];(e) Image defogging method based on FFA-Net in Ref.[29];(f) Image defogging method based on PSD in Ref.[30];(g) The proposed method.

Fig.17.Comparison of methods on synthetic foggy images acquired at night,Experiment 2: (a) Reference image,(b) Composite foggy image;(c) Defogging method proposed in Ref.[8];(d) Image defogging method based on the multi-color luminescence model in Ref.[10];(e) Image defogging method based on FFA-Net in Ref.[29];(f) Image defogging method based on PSD in Ref.[30];(g) The proposed method.

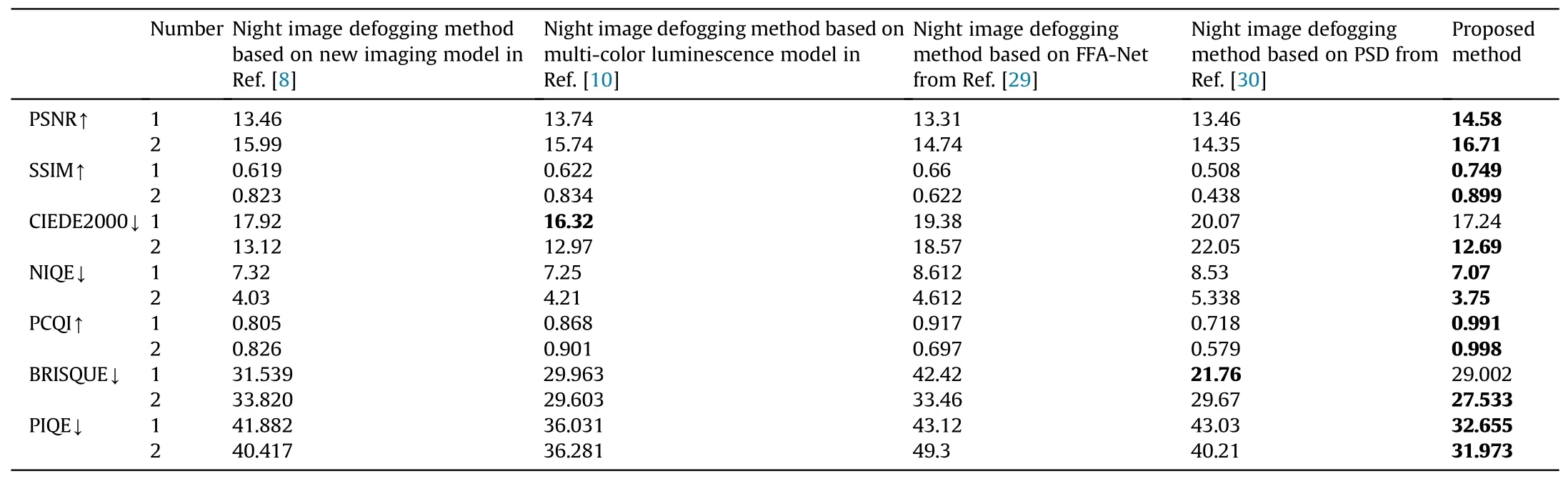

Table 1 shows a quantitative comparison of the methods in terms of the PSNR, SSIM, CIEDE2000, NIQE, PCQI, BRISQUE, and PIQE. The proposed method yielded results similar to the reference image in terms of color and illumination.

Table 1 Assessment of quality of defogging results on synthetic foggy images acquired at night.

4.4.Experiment on removing fog from images acquired at night

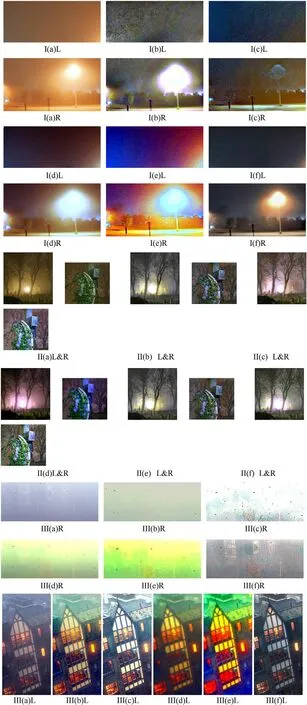

To verify the performance of the proposed method, foggy images acquired at night (numbered I-VII) were used, as shown in Fig.18. The image details inside the red boxes of test images I-III in Fig.18 are enlarged in Fig.19.

Fig.18.Comparison of methods on foggy images acquired at night: (a) Foggy image acquired at night;(b) Results of the method proposed in Ref.[8];(c) Results of the polychromatic luminescence model in Ref.[10];(d) Results of FFA-Net in Ref.[29];(e) Results of PSD in Ref.[30];(f) Results of the proposed method.

Fig.19.The enlarged image details inside the red boxes in Fig.18 (the suffix 'L' denotes the left red box and 'R' the right red box): (a) Foggy images acquired at night;(b) Results of the method proposed in Ref.[8];(c) Results of the polychromatic luminescence model in Ref.[10];(d) Results of FFA-Net in Ref.[29];(e) Results of PSD in Ref.[30];(f) Results of the proposed method.

The method proposed in Ref.[8] could not deal with color distortion, and produced exaggerated intensity and color in some areas. The method based on the multi-color luminescence model [10] tended to over-magnify the color around the edges, resulting in color edge artifacts, especially in the region occupied by the sky and the area around the light source. The methods based on FFA-Net in Ref.[29] and PSD in Ref.[30] produced serious color deviations. The proposed method could correct for color distortion, enhance visibility, and obtain more natural defogged images. The magnified comparison in Fig.19 shows that, compared with the methods proposed in Refs.[8,10,29,30], the proposed method generated more natural results. In contrast to the overexposure and color deviation produced by these methods, the proposed method suppressed glow and incurred only a slight color deviation at the light source. It thus yielded a better color balance and clearer visibility in the area around the street lamp, as shown in Fig.20.

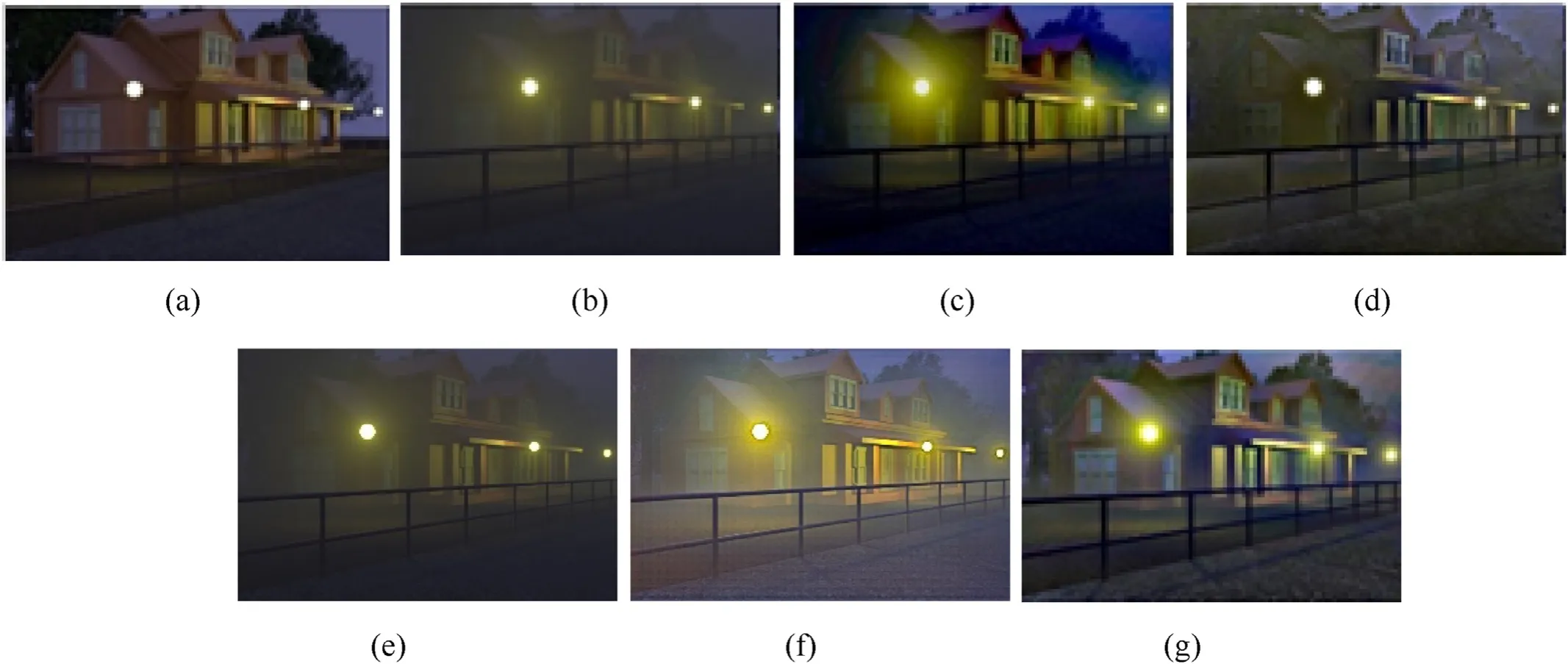

Fig.20.Results of ablation experiment: (a) Foggy image at night;(b) Experiment A;(c) Experiment B;(d) Experiment C;(e) The proposed method.

To quantitatively compare several typical image defogging methods with the proposed method, the NIQE was used to evaluate their defogging results. Table 2 shows the average NIQE values on 28 foggy images acquired at night and collected from Flickr. The results show that the proposed method was superior to the other methods in terms of removing fog from images acquired at night.

Table 2 NIQE results of the methods on foggy images acquired at night.

4.5.Ablation experiment

For this experiment, the system was implemented in MATLAB 2020a on a laptop with a quad-core i5 processor at 2.30 GHz, 6 GB of RAM, and 64-bit Windows 7.

The proposed method is composed of three parts: the color channel transfer module, the DehazeNet fog removal network, and the module to calculate the spatial variation in atmospheric lighting. The first and third modules are designed, respectively, to compensate for the high attenuation channel of the foggy image acquired at night, and to account for the uneven and multi-color environmental lighting by considering its characteristics. We conducted an ablation experiment to verify the necessity of these two modules in the proposed method.

The experiment consisted of three parts:

(1) Without preprocessing the image based on color channel transfer, DehazeNet was used directly to estimate transmittance, and the spatial variation in atmospheric lighting was then estimated by the proposed module. The result was recorded as experiment A.

(2) After color channel transfer-based preprocessing, DehazeNet was used to estimate transmittance, and global uniform estimation of atmospheric light [5] was used to obtain the results of fog removal. The result was recorded as experiment B.

(3) Without preprocessing using color channel transfer, DehazeNet was used directly to estimate transmittance, and global uniform estimation of atmospheric light [5] was used. The result was recorded as experiment C.
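The three configurations above, together with the full method, form a 2×2 design over two switches. The sketch below records that design and illustrates why a single global atmospheric light can fail under multi-colored lamps. It is only an illustration under stated assumptions: it assumes the haze model I = J·t + A·(1 − t), uses the ground-truth transmittance and atmospheric light rather than estimating them, takes the mean of the per-pixel light as a stand-in for the global estimate, and does not implement color channel transfer. The names `recover` and `ABLATION` are hypothetical.

```python
import numpy as np

def recover(foggy, t, A, t_min=0.1):
    """Invert I = J*t + A*(1 - t); A is global (3,) or per-pixel (H, W, 3)."""
    t = np.clip(t, t_min, 1.0)[..., None]
    return (foggy - A) / t + A

# Switches: (color channel transfer, spatially varying atmospheric light).
ABLATION = {
    "A": (False, True),
    "B": (True, False),
    "C": (False, False),
    "proposed": (True, True),
}

# Toy scene: a gray surface lit by a warm lamp (left) and a cool lamp (right).
H, W = 2, 4
J = np.full((H, W, 3), 0.4)
t = np.full((H, W), 0.6)
A_spatial = np.empty((H, W, 3))
A_spatial[:, :2] = [1.0, 0.8, 0.5]
A_spatial[:, 2:] = [0.5, 0.7, 1.0]
foggy = J * t[..., None] + A_spatial * (1.0 - t[..., None])

# Per-pixel light inverts the model exactly; a single global light does not.
J_spatial = recover(foggy, t, A_spatial)
A_global = A_spatial.reshape(-1, 3).mean(axis=0)
J_global = recover(foggy, t, A_global)
err_spatial = np.abs(J_spatial - J).max()
err_global = np.abs(J_global - J).max()
```

In this toy case the residual error of the global variant equals (A_spatial − A_global)·(1 − t)/t, which appears as a color cast that differs between the two lamp regions.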

Fig.20 shows the results of the ablation experiment.

A comparison of Fig.20(b) with Fig.20(e), and of Fig.20(c) with Fig.20(d), shows that the color channel transfer module could compensate for the high attenuation channel, and helped restore a more natural and color-balanced image. A comparison of Fig.20(c) with Fig.20(e), and of Fig.20(b) with Fig.20(d), shows that the module to estimate the spatial variation in atmospheric lighting could remove glow, compensate for the uneven and multi-colored environmental lighting, and correct the color distribution. This helped remove fog and improve visibility. A comparison of Fig.20(d) and Fig.20(e) shows that the DehazeNet defogging network without the two modules did not yield satisfactory results. Therefore, the color channel transfer module and the module to estimate the spatial variation in atmospheric lighting are indispensable to the proposed method.

To quantitatively evaluate the necessity and function of the color channel transfer module and the module to estimate the spatial variation in atmospheric light, the NIQE was used to evaluate the results of ablation. Table 3 shows that the algorithm using both modules to remove fog from images acquired at night delivered the best performance. The absence of either module degraded performance, and the module to estimate the spatial variation in atmospheric light had a more significant impact on the results.

Table 3 Results of NIQE for an ablation experiment on foggy images acquired at night.

5.Conclusions

This study used the statistical characteristics of foggy images acquired at night, especially the difference between foggy images acquired during the day and at night, to propose a single-frame fog removal method based on color channel transfer and estimated spatial variation in atmospheric light. The aim was to defog images acquired at night under complex ambient lighting. Color channel transfer was designed to compensate for the high attenuation channel of foggy images acquired at night, a deep convolutional network was used to estimate the distribution of transmittance, and atmospheric light was estimated point by point according to the maximum reflection prior. Comparative experiments involving other defogging methods showed that the proposed method can better deal with the high attenuation channel, and can remove glow due to multi-color, non-uniform environmental lighting. An ablation experiment verified the necessity of the color channel transfer module and the module to estimate the spatial variation in atmospheric light for the proposed method.

Declaration of competing interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Acknowledgments

This work was supported by a grant from the Qian Xuesen Laboratory of Space Technology,China Academy of Space Technology (Grant No.GZZKFJJ2020004),the National Natural Science Foundation of China(Grant Nos.61875013 and 61827814),and the Natural Science Foundation of Beijing Municipality (Grant No.Z190018).
